You are carrying around the most sophisticated computing device ever known to exist – and you probably have not thought much about it today. It sits quietly inside your skull, weighing roughly as much as a small bag of flour, consuming less power than a dim light bulb, yet doing things that would make the most advanced machines on earth simply give up. The human brain is not just impressive. It is, in many respects, still beyond our ability to fully replicate or even completely understand.

The comparison between the brain and a supercomputer is one of the most fascinating debates in science, and the results are, honestly, a little humbling for the machines. So let’s dive in – what you are about to read might just change the way you look at your own mind forever.

A Processing Power That Defies Imagination

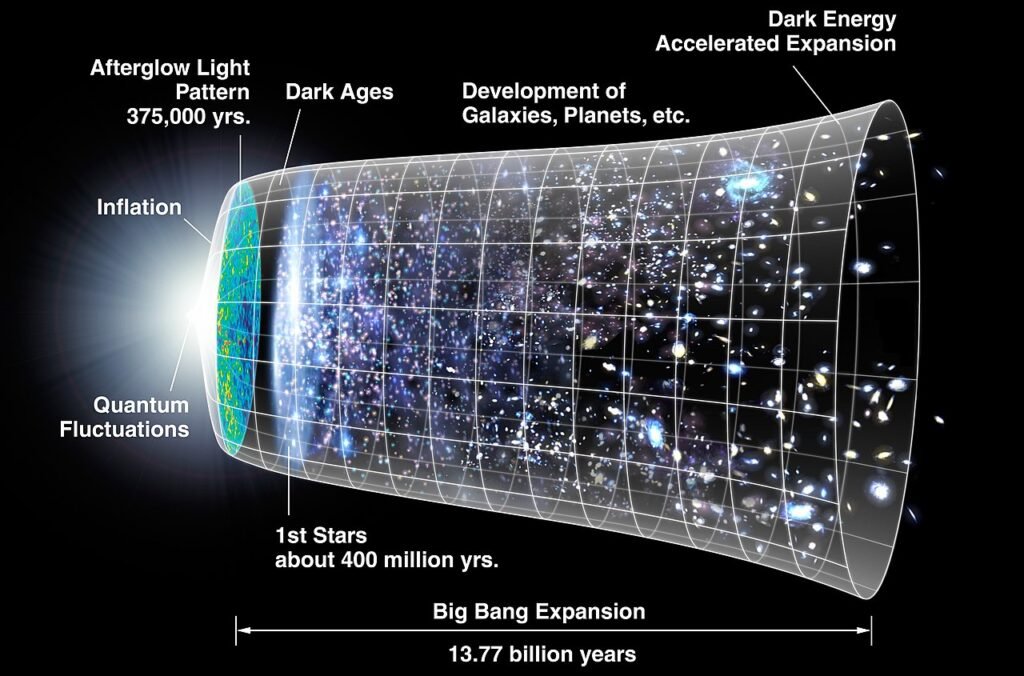

Here is something that genuinely floors people when they hear it for the first time. By some estimates, the human brain operates at roughly 1 exaFLOP, which is the equivalent of a billion billion calculations per second. That is not a typo. One billion billion. That figure puts your brain on a par with the very fastest machines built today.

In 2013, researchers in Japan tried to match the processing power of just one percent of the brain for a single second – and the K Computer, then the world’s fourth-fastest supercomputer, took a full 40 minutes to crunch the calculations. Think about that. One percent. Forty minutes. Your brain does the whole thing in one second, while you are busy wondering what to have for lunch.
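The arithmetic behind that comparison can be sketched in a few lines of Python. The 40 minutes, one second, and one percent are the figures quoted above; the function name `slowdown_factor` is hypothetical, and the linear scaling to the whole brain is a simplifying assumption, since real simulations rarely scale that neatly.

```python
def slowdown_factor(wall_clock_seconds: float, simulated_seconds: float) -> float:
    """How many times slower than real time a simulation ran."""
    return wall_clock_seconds / simulated_seconds


# Figures quoted in the text: 40 minutes of computing for 1 second of activity.
k_computer_seconds = 40 * 60
partial = slowdown_factor(k_computer_seconds, 1.0)

# Assumption: cost grows linearly with the share of the brain being simulated.
percent_simulated = 1
whole_brain = partial * (100 / percent_simulated)

print(f"Slowdown for 1% of the brain: {partial:,.0f}x")
print(f"Naive whole-brain slowdown:   {whole_brain:,.0f}x")
```

Under that naive assumption, the machine would need days of computing to cover a single second of whole-brain activity.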

The Energy Efficiency That Embarrasses Entire Data Centers

A 100 petaflop supercomputer requires roughly 15 million watts of power – enough to support a city of about 10,000 homes – and occupies an area the size of an American football field. Your brain, even when solving a difficult problem, consumes somewhere between 15 and 20 watts, roughly the power needed to keep a rather dim light bulb lit, and fits comfortably inside your head. That is an almost comical gap in efficiency.

Frontier, the Hewlett Packard Enterprise system that topped the world supercomputer rankings when it debuted in 2022, can perform just over one quintillion operations per second, covers 680 square meters, and requires 22.7 megawatts to run. Your brain is estimated to manage a comparable number of operations per second using just 20 watts of power, while weighing a mere 1.3 to 1.4 kilograms. It is like comparing a single birthday candle to a power station. The candle wins on efficiency by a landslide.
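To see how wide that gap is, here is a rough back-of-the-envelope sketch in Python. It uses the figures quoted above and assumes, as the estimate does, that both systems deliver about one quintillion operations per second; the helper name `ops_per_watt` is made up for illustration.

```python
def ops_per_watt(ops_per_second: float, watts: float) -> float:
    """Operations per second delivered for each watt drawn."""
    return ops_per_second / watts


EXA = 1e18  # one quintillion operations per second

# Figures quoted in the text; the brain's exascale rating is only an estimate.
frontier = ops_per_watt(EXA, 22.7e6)  # 22.7 megawatts
brain = ops_per_watt(EXA, 20.0)       # about 20 watts

advantage = brain / frontier

print(f"Frontier: {frontier:.2e} operations per second per watt")
print(f"Brain:    {brain:.2e} operations per second per watt")
print(f"Brain advantage: roughly {advantage:,.0f}x")
```

On these numbers the brain comes out about a million times more efficient, which is simply the ratio of 22.7 megawatts to 20 watts.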

The Staggering Scale of Your Neural Network

The galaxy is home to more than 100 billion stars, possibly as many as 400 billion. Yet those inconceivable numbers pale in comparison to the roughly 100 trillion synaptic connections between brain cells. Synapses are the spaces over which neurons send and receive electrical and chemical signals. You have more connections happening in your skull than there are stars in the galaxy. Let that sink in for a moment.

In the frontal cortex alone – the part of the brain housing abilities like language – neurons can have 10,000 or more synapses on their branching dendrites, each of which may receive information from a different cell. The activity at those thousands of inputs gets added up to cause the neuron to fire, and that is how information is transferred in the brain. It is not just a network. It is a network of networks, layered in ways we are still trying to map.
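That add-it-up-and-fire idea can be sketched as a toy threshold unit in Python. This is a deliberately crude illustration, not a biophysical model: the function name `neuron_fires`, the equal weights, and the threshold values are all hypothetical.

```python
from typing import Sequence


def neuron_fires(inputs: Sequence[float], weights: Sequence[float], threshold: float) -> bool:
    """Return True when the weighted sum of synaptic inputs reaches the threshold."""
    if len(inputs) != len(weights):
        raise ValueError("each input needs exactly one weight")
    total = sum(x * w for x, w in zip(inputs, weights))
    return total >= threshold


# Hypothetical example: 10,000 synapses, 30% of them active (1.0), the rest silent (0.0).
n_synapses = 10_000
inputs = [1.0 if i % 10 < 3 else 0.0 for i in range(n_synapses)]
weights = [0.001] * n_synapses  # assumption: every synapse contributes equally

print(neuron_fires(inputs, weights, threshold=2.5))  # summed input is about 3.0
print(neuron_fires(inputs, weights, threshold=3.5))
```

Real neurons also weigh inhibitory inputs, timing, and where on the dendrite a signal arrives, so treat this as the cartoon version.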

Your Brain’s Memory Storage Would Stun Any Computer Engineer

By one widely quoted estimate, each neuron forms about 1,000 connections to other neurons, amounting to more than a trillion connections in total. If each neuron could only help store a single memory, running out of space would be a problem – you might have only a few gigabytes of storage. Yet neurons combine so that each one helps with many memories at a time, vastly increasing the brain’s memory storage capacity to something closer to 2.5 petabytes. That is roughly two and a half million gigabytes.
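The unit conversion is easy to check. A minimal Python sketch, assuming decimal units (1 petabyte = 1,000,000 gigabytes); the 512 GB laptop drive is a hypothetical yardstick added purely for scale.

```python
GB_PER_PB = 1_000_000  # decimal units: 1 petabyte = 1,000,000 gigabytes


def petabytes_to_gigabytes(petabytes: float) -> float:
    """Convert petabytes to gigabytes using decimal (SI) units."""
    return petabytes * GB_PER_PB


# The 2.5 petabyte estimate quoted in the text.
capacity_gb = petabytes_to_gigabytes(2.5)
print(f"{capacity_gb:,.0f} GB")

# Hypothetical comparison: how many 512 GB laptop drives that would fill.
laptop_drive_gb = 512
drives = capacity_gb / laptop_drive_gb
print(f"About {drives:,.0f} drives of {laptop_drive_gb} GB each")
```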

Salk Institute researchers achieved critical insight into the size of neural connections, putting the memory capacity of the brain far higher than common estimates. Their new work also answers a longstanding question as to how the brain is so energy efficient and could help engineers build computers that are incredibly powerful but also conserve energy. In other words, your brain is not just a storage marvel – it is the blueprint that future computers are trying to imitate.

Parallel Processing: The Brain’s Secret Weapon

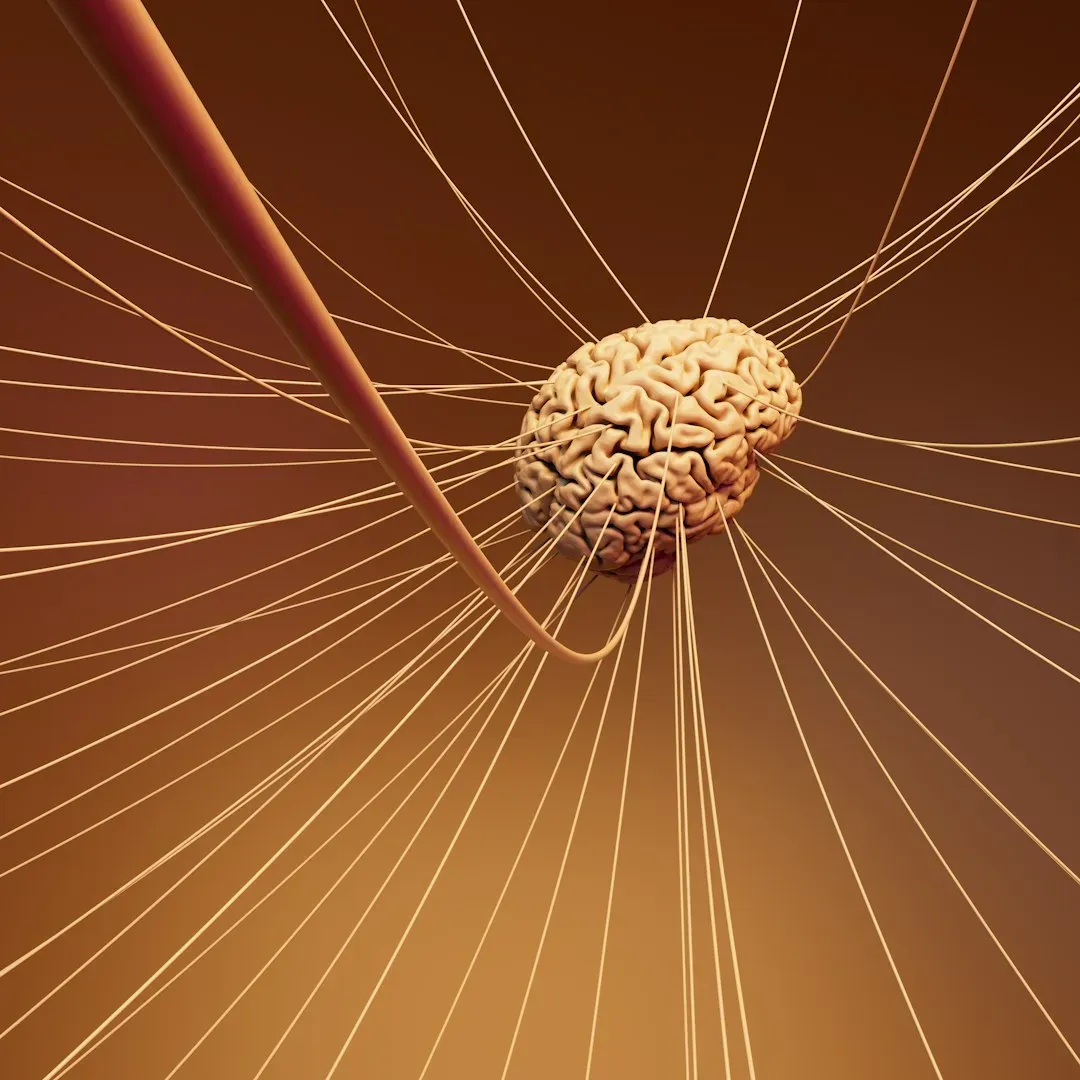

The brain is both hardware and software. The same interconnected areas, connected by billions of neurons and perhaps trillions of glial cells, can simultaneously perceive, interpret, store, analyze, and distribute information. Computers, by their very definition and basic construction, have some parts for processing and others for memory – the brain makes no such separation, which makes it enormously efficient. Think of it like a kitchen where the chef cooks, plates, tastes, and directs the staff all at once, rather than doing each step one at a time.

The brain employs massively parallel processing, taking advantage of the large number of neurons and large number of connections each neuron makes. For instance, a moving tennis ball activates many cells in the retina called photoreceptors. These signals are then transmitted to many different kinds of neurons in the retina in parallel. By the time signals have passed through just two to three synaptic connections in the retina, information about the location, direction, and speed of the ball has already been extracted and transmitted in parallel to the brain. A conventional serial processor would have to work through those steps one after another. Your brain laughs at queues.

Neuroplasticity: The Feature No Computer Can Match

Neuroplasticity refers to your brain’s remarkable ability to reorganize itself by forming new neural connections throughout life. When you rewire your brain, you are essentially creating new pathways that bypass old, unhelpful patterns of thinking and behavior. Research from Harvard Medical School shows that neuroplasticity remains active well into our 80s, making brain rewiring possible at any age. No software update does that. No chip swap. The brain just quietly rebuilds itself.

We see this amazing transformative ability in a wide variety of brain functions, such as memory formation, knowledge acquisition, physical development, and even recovery from brain damage. When the brain identifies a more efficient or effective way to compute and function, it can morph and alter its physical and neuronal structure. Until we achieve true artificial intelligence – in which computers should theoretically be able to rewire themselves – neuroplasticity will always keep the human brain at least one step ahead of static supercomputers. That is a bold statement, and I honestly think it holds up.

How Neurons Communicate at Extraordinary Speed

Scientists found that synaptic depression time is much shorter in human neurons compared to those of mice. Shorter depressions allow neurons to start firing again sooner, which means human neurons can send more messages in the same amount of time. It is a fine-tuned biological system that evolution has been perfecting over millions of years. No lab has come close to replicating that elegance from scratch.

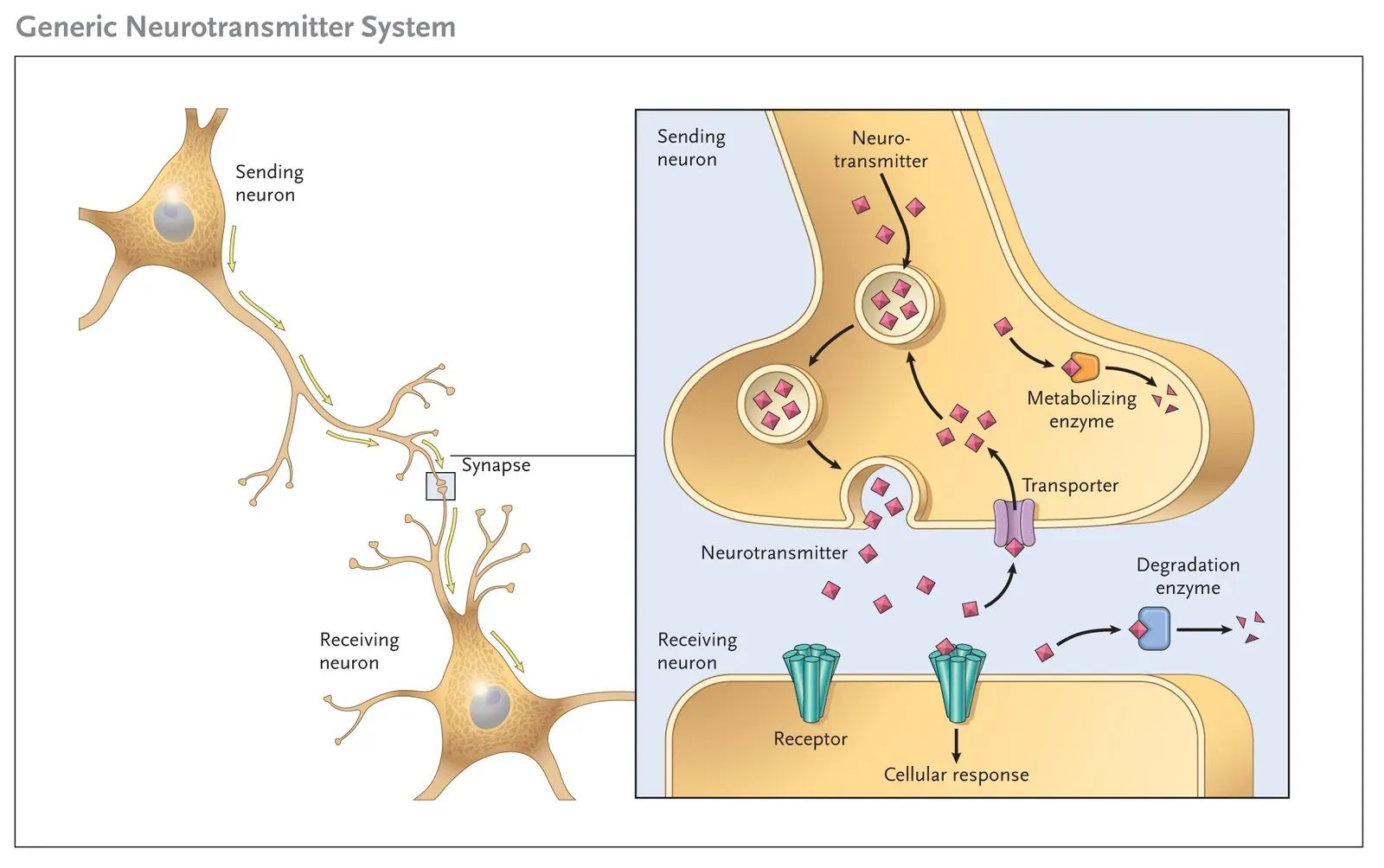

Synapses can be thought of as converting an electrical signal – the action potential – into a chemical signal in the form of neurotransmitter release, and then, upon binding of the transmitter to the postsynaptic receptor, switching the signal back again into an electrical form as charged ions flow into or out of the postsynaptic neuron. That constant electrical-chemical-electrical conversion happens trillions of times per second across your entire brain. It is a little like magic, only with chemistry.

The Race to Build a Brain-Like Supercomputer

Scientists at Western Sydney University unveiled DeepSouth, the world’s first supercomputer capable of simulating networks of neurons and synapses at the scale of the human brain. DeepSouth belongs to an approach known as neuromorphic computing, which aims to mimic the biological processes of the human brain. It is run from the university’s International Centre for Neuromorphic Systems. This is genuinely exciting – and it shows just how seriously researchers are taking the brain as a design model.

A study found that even today’s most advanced supercomputers are only about one-thirtieth as powerful as the human brain when measured using a benchmark called traversed edges per second (TEPS), which essentially measures how quickly a computer can shift information from one point to another within its own system. Even with all of humanity’s computing advances, you are still walking around with something roughly 30 times more powerful than what we can build. That is not a small gap – that is a canyon.

Conclusion

The human brain is not simply a biological computer. It is something far stranger, more dynamic, and more astonishing than that. It processes the world in parallel streams, rewires itself through experience, stores the equivalent of millions of hours of information, and does all of this on a fraction of the power an electric kettle draws. Every time you recognize a face, catch a falling object, or learn a new skill, you are witnessing a feat that no machine on earth has fully replicated.

We live in an age of breathtaking technological progress, and yet the most powerful processing system on the planet has been sitting between your ears all along. Supercomputers are extraordinary tools – but the brain that designed them is something else entirely. It is hard not to feel a quiet sense of wonder about that.

So the next time someone tells you that machines are smarter than people, just smile. Your brain just processed that sentence in a fraction of a second. What do you think about that?