There’s a quiet, unsettling puzzle hiding in the middle of everyday life: why does anything feel like anything from the inside? Your brain processes light, sound, and touch, but behind that machinery there is a private movie playing, full of colors, emotions, pain, and joy. You don’t just detect the world; you experience it. And strangely, no one really knows why it has to be this way, or if it even “has” to be this way at all.

Neuroscience can tell us a lot about which brain areas light up when you see red or feel afraid, but that doesn’t explain why seeing red has a particular warmth, or why sadness feels heavy and slow. That raw feel is often called “what it is like” to be you in this moment. Once you notice how weird that is, it’s hard to unsee it. This might be the deepest unsolved problem we have about ourselves.

The Strange Problem Hiding in Plain Sight

Imagine a robot that can describe a sunset perfectly: it names the colors, measures the wavelengths, predicts how the sky will change. It does everything a skilled photographer could do, yet there’s an obvious question: is there anything it is like to be that robot watching the sunset? Does it have even a faint whisper of awe, or is it just dark inside? That gap between describing and feeling is exactly where the mystery of consciousness lives.

In philosophy, people call this the “hard problem” of consciousness: explaining why and how physical processes in the brain give rise to subjective experience. It’s not just about how we process information, learn, or react. It’s about why pain hurts, why music moves us, why a memory can sting years later. The unsettling part is that you can, in principle, build devices that behave like us from the outside, but you still wouldn’t know whether the lights are on inside – or if they are, why they turned on in the first place.

Qualia: The Private Texture of Experience

Think about the taste of coffee, the exact shade of the sky just before a storm, or the burn of embarrassment when you remember something awkward you did ten years ago. Those tiny, intimate textures of experience are often called qualia. They’re not just facts about the world; they’re facts about how the world appears to you. No brain scan, however detailed, can yet tell us what your coffee tastes like from the inside.

What makes qualia so puzzling is how stubbornly private they are. You and a friend can both look at a red apple and agree it’s red, but you can’t step into their mind to check whether their “red” feels like your “red.” It’s like each of us lives inside a silent cinema only we can see. That unshareable, first-person feel is exactly what makes consciousness so hard to pin down in the language of neurons and circuits, even as we learn more about the brain every year.

Brains, Neurons, and the Limits of Explanation

Modern neuroscience has made stunning progress in mapping how the brain processes information. We know there are regions that track faces, areas that respond to motion, and networks that seem especially active when we’re daydreaming or reflecting on ourselves. We can sometimes predict what someone is seeing or imagining by decoding their brain activity. Yet knowing which circuit is active does not automatically tell us why that activity feels like anything at all.

One way to picture this is to imagine a city seen from above at night. You can see where the lights turn on, where traffic flows, which neighborhoods are buzzing. That’s a bit like scanning a brain. But the view from inside a single apartment – the warmth, the smell of dinner, the music playing – never appears in the aerial shot. The scientific map of the brain is our aerial view; consciousness is the inside of the apartment. Bridging those two perspectives is far harder than just collecting more data.

Theories That Try to Crack the Mystery

Because the problem is so slippery, several bold theories have tried to explain why experience arises. One idea, often called global workspace theory, suggests that consciousness appears when information in the brain becomes globally available, like a shared bulletin board that many processes can access at once. According to this view, a perception feels conscious when it “wins” a kind of competition and gets broadcast widely across the brain’s networks.

Another influential approach, integrated information theory, starts from the inside and asks: how much does a system form a unified whole that cannot be broken into independent parts? On this view, consciousness corresponds to how deeply integrated and structured the system’s cause-and-effect organization is. These theories are tested with brain imaging, stimulation, and even studies of anesthesia and coma, but they still face a brutal question: even if they match the mechanisms, do they really explain why those mechanisms feel like something from the inside?

Is Consciousness Fundamental or Emergent?

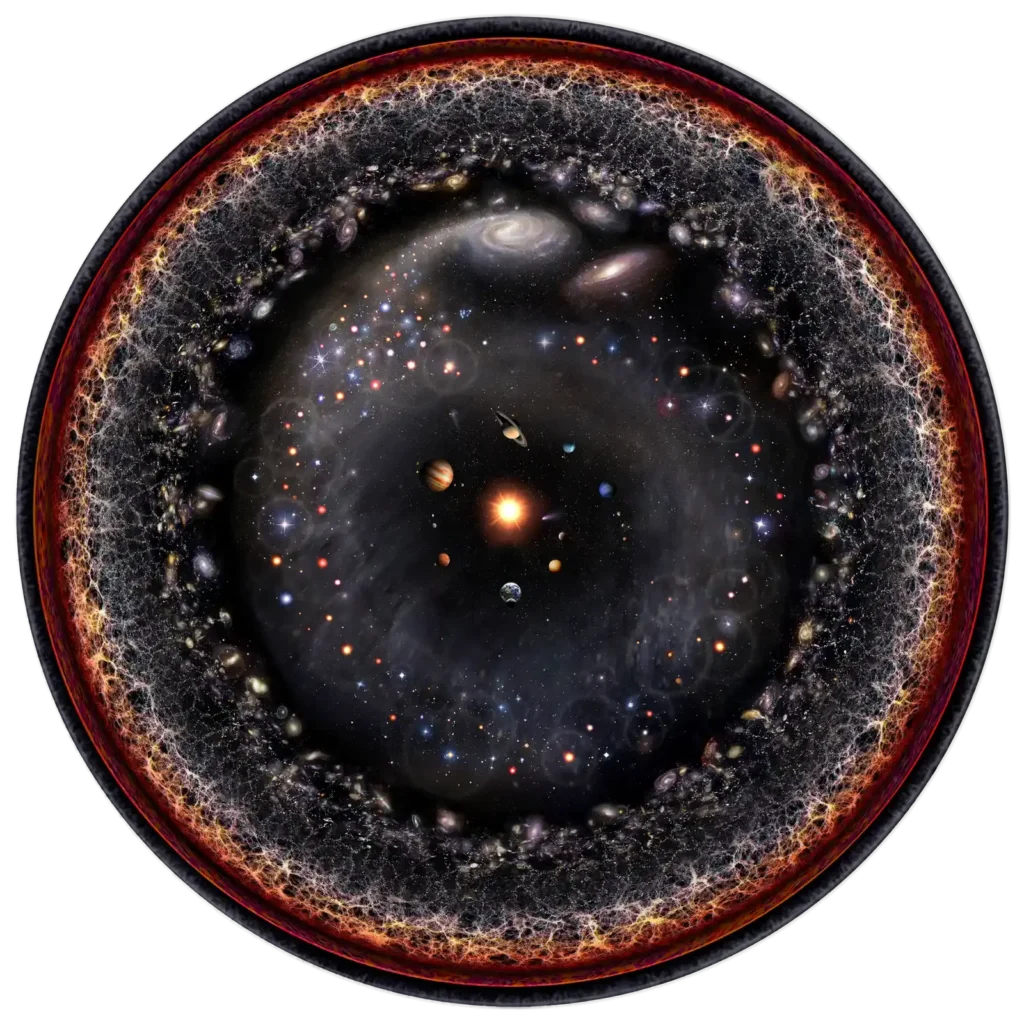

One of the sharpest debates is whether consciousness is something that emerges only when matter becomes organized in extremely complex ways, like in the human brain, or whether it is in some sense built into the fabric of reality, like space, time, or mass. The emergent view treats consciousness as a kind of biological achievement: get enough neurons wired up in the right way, and at some critical point, experience “switches on” as a side effect of complex computation.

Others argue that this approach might be backward and that consciousness could be more fundamental than we think. In some versions of this idea, even very simple systems might have tiny, unimaginably faint glimmers of experience, which become richer as systems grow more complex and integrated. This doesn’t mean that rocks think or that thermostats have inner lives like ours, but it does challenge the comforting picture where consciousness is a neat, on–off property that appears only late and suddenly in evolution.

Artificial Minds and the Question of “Real” Experience

As artificial intelligence systems get more advanced, a hard question is creeping from philosophy seminars into everyday life: could a machine ever really feel anything, or will it only simulate feeling? You can already talk to systems that seem empathetic, reflective, even oddly relatable, but all of that could be clever pattern matching rather than genuine experience. The scary part is that from the outside, behavior might not tell us the whole story either way.

If a future AI reported a rich inner life – fear, curiosity, boredom – would we believe it? Some researchers argue that once an artificial system reaches a certain level of complexity and integration, it might be unfair to simply assume there is nothing it is like to be that system. Others insist we’re projecting human traits onto sophisticated tools. Underneath that argument is the same haunting puzzle: we don’t fully understand why our own consciousness feels like something, so how could we be confident about anyone – or anything – else’s?

Why the Mystery Matters for How We Live

At first glance, consciousness might seem like a purely abstract puzzle, something for philosophers to argue about late at night. But what we think consciousness is changes how we treat animals, patients with brain injuries, people in altered states, and future machines. Our sense of who can suffer, who can enjoy, and who deserves moral consideration hangs on where we believe consciousness begins and ends. The stakes are much more than theoretical.

There’s also a personal side that’s harder to measure but just as real. To notice your own awareness is to realize that your life isn’t just a list of events; it’s a continuous flow of felt moments. That can make existence feel more fragile but also more precious, like realizing you’ve been walking through a gallery without really looking at the paintings. Whether consciousness turns out to be a cosmic accident or a basic feature of reality, the simple fact that your inner world feels like something might be the most astonishing part of being alive at all.