Every so often, science lurches forward with a finding that forces us to redraw the map of reality. The latest candidate is a proposed law that links the way energy flows through systems to how information is stored and erased within them. At first glance it sounds abstract, the kind of thing that lives on whiteboards and in dense equations, but this breakthrough reaches straight into the beating heart of nature, from star-forming galaxies to the slow, stubborn lives of giant tortoises. Researchers argue that this law may explain why some animals manage to bend the rules of aging, and why life itself seems so good at cheating chaos. In the process, it pushes physics deeper into the messy, beautiful world of biology than ever before.

The Hidden Clues

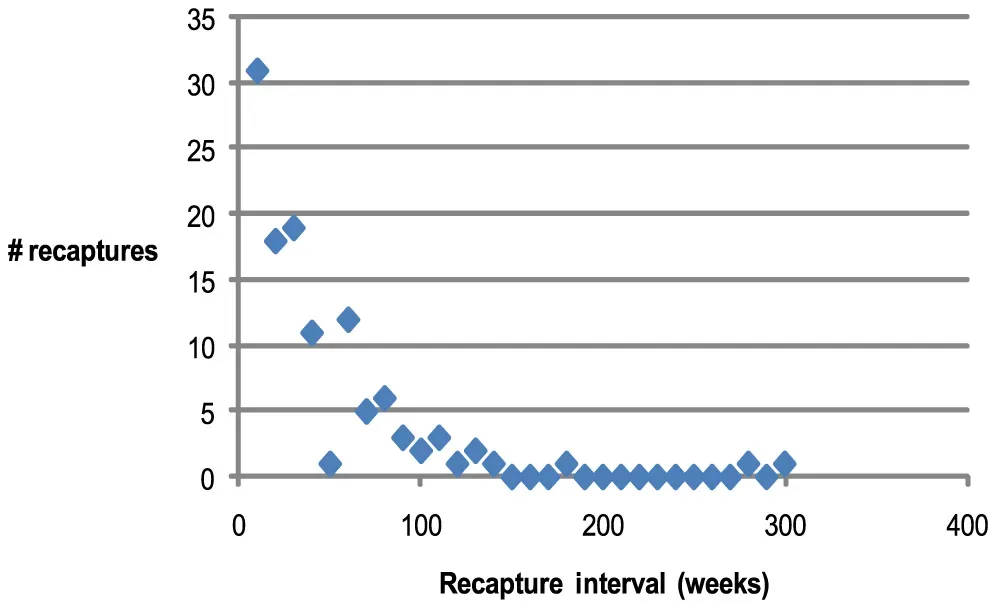

It started, oddly enough, with a puzzle about why some creatures age so slowly that they almost seem to ignore time. Bowhead whales can live for over two centuries, Greenland sharks are estimated to live longer still, and certain turtles appear to barely increase their risk of death as they get older. Biologists have cataloged these outliers for years, but their longevity never fit cleanly into the traditional physics of wear, tear, and entropy. A group of physicists and biophysicists began asking a provocative question: what if living systems follow an extra rule about how they handle energy and information?

When they looked closely, they found patterns that did not sit comfortably inside existing thermodynamic laws alone. Long-lived animals, from clams to salamanders, invest heavily in keeping their internal information – DNA, proteins, cell structures – remarkably well organized over long periods. That “information maintenance” carries an energy cost, yet it seems to pay off by slowing the slide into chaos that usually accompanies aging. These clues suggested there might be a deeper quantitative principle at work, one that treats information as a physical resource, not just an abstract idea.

From Ancient Tools to Modern Science

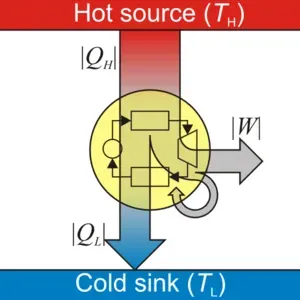

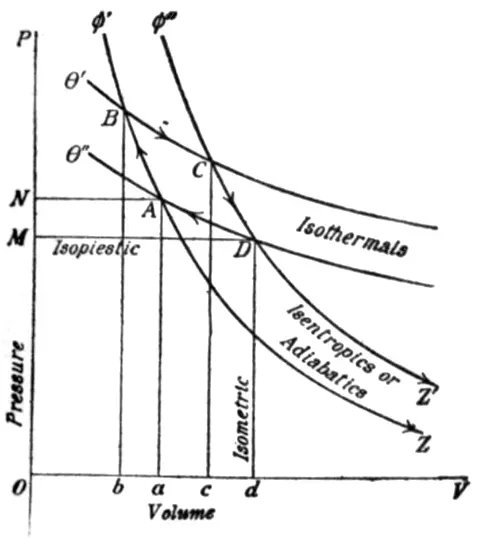

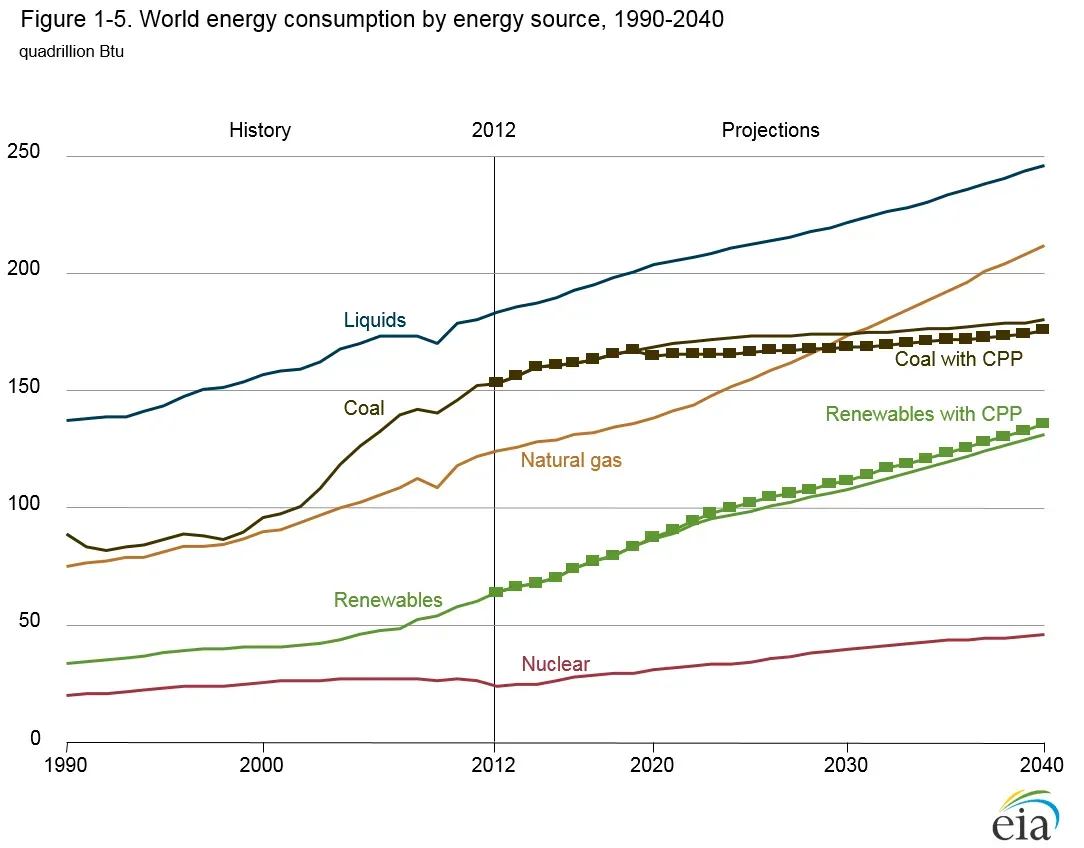

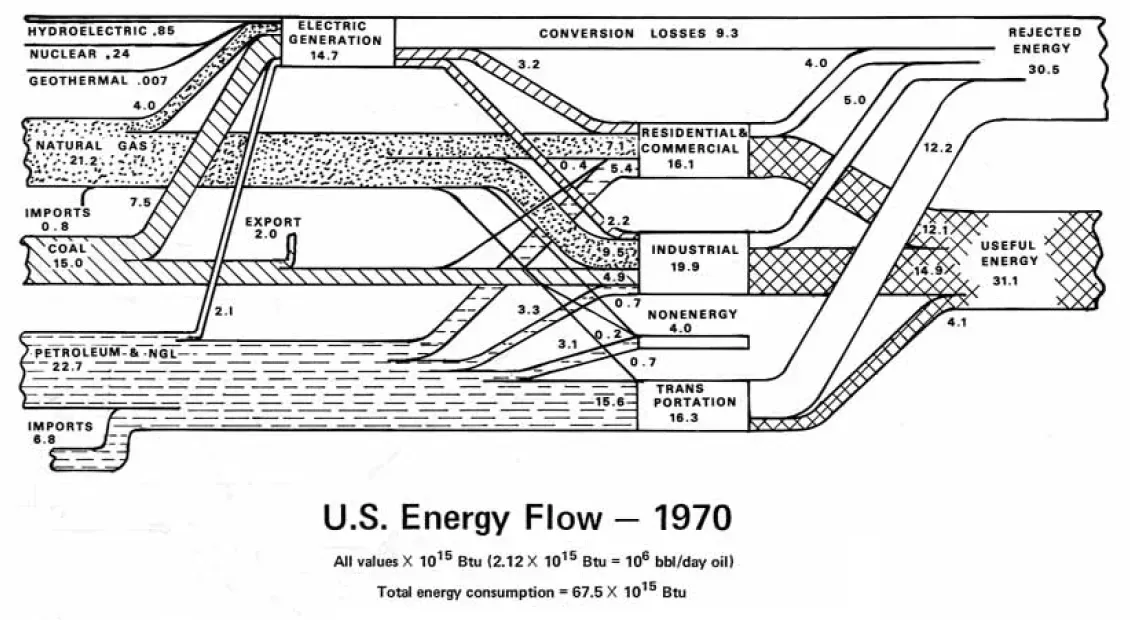

The road to this proposed new law runs through the very old language of thermodynamics, the field that began with steam engines and coal. Nineteenth-century physicists were trying to make trains run better, not decode the secrets of jellyfish or bowhead whales, but they stumbled on deep truths: energy is conserved, and any use of it comes with an increase in disorder, or entropy. For over a century these laws have guided everything from engine design to cosmology. Yet life always looked slightly out of place in that framework, like a defiant note in an otherwise orderly symphony.

In the twentieth century, information theory quietly joined the conversation. Physicists realized that erasing one bit of information carries a minimum energy cost, a result now known as Landauer’s principle, which ties the abstract world of bits to the gritty world of heat. What the new work does is weave these threads together into a single, sharper statement: in any system capable of processing information – cells, brains, even ecosystems – the rate at which useful information is preserved is tightly coupled to the energy the system must burn and the entropy it must export to its surroundings. In other words, if you want to remember, adapt, and stay organized in a chaotic universe, you have to pay a very specific physical price.
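For a sense of scale, the minimum cost of erasing a bit can be worked out in a few lines. The sketch below uses the standard Landauer bound, k_B · T · ln 2, which is textbook physics and not the proposed new law, whose equation the article does not spell out. The function names are hypothetical, and the ATP figure (about 30.5 kJ/mol, the textbook standard free energy of hydrolysis) is an assumption chosen only as a familiar biological yardstick.

```python
import math

# Physical constants (exact values in the 2019 SI definition).
BOLTZMANN_J_PER_K = 1.380649e-23
AVOGADRO_PER_MOL = 6.02214076e23


def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum energy (in joules) to erase one bit at a given temperature.

    Implements the Landauer bound: E_min = k_B * T * ln(2).
    """
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


def landauer_bits_per_atp(
    temperature_kelvin: float,
    atp_free_energy_j_per_mol: float = 30.5e3,  # assumed textbook value
) -> float:
    """How many Landauer-limit bit erasures one ATP hydrolysis could pay for.

    This is an upper bound for illustration only; real cellular
    machinery is not claimed to reach it.
    """
    energy_per_molecule = atp_free_energy_j_per_mol / AVOGADRO_PER_MOL
    return energy_per_molecule / landauer_limit_joules(temperature_kelvin)


if __name__ == "__main__":
    body_temperature = 310.0  # roughly mammalian body temperature, in kelvin
    limit = landauer_limit_joules(body_temperature)
    print(f"Landauer limit at {body_temperature} K: {limit:.3e} J per bit")
    print(f"Bit erasures per ATP (upper bound): "
          f"{landauer_bits_per_atp(body_temperature):.1f}")
```

At body temperature the bound comes out at roughly 3 × 10⁻²¹ joules per bit, so under these assumptions a single ATP molecule carries enough energy for roughly 17 ideal bit erasures. How close real cells come to that floor is exactly the kind of question the proposed law is meant to address.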

Strange Science at the Edge of Life

At the center of this story is a deceptively simple equation relating three quantities: how much energy a living system takes in, how much disorder it dumps back out, and how efficiently it preserves internal information about its own state. Researchers tested the idea on models of cellular networks and on real data sets from long-lived versus short-lived species. They found that organisms which age slowly tend to sit close to an optimal balance predicted by the new rule, investing energy into high-fidelity repair and error correction. Fast-lived species, like small rodents, lean into rapid reproduction at the cost of long-term information maintenance.

What sounds like a dry optimization principle quickly becomes strange science when you apply it to nature’s oddities. Consider naked mole-rats, which live far longer than other rodents of similar size, or certain jellyfish that can revert to younger stages. Their cellular machinery behaves as if it is fiercely committed to keeping internal information intact, even when that means operating with slim margins of energy. In laboratory models, tweaking the energy–information balance can push simulated organisms toward either fast-burning, short lives or slow, persistent ones that hug the predictions of the new law. The result is a physics-flavored explanation for why evolution seems to discover similar longevity tricks in wildly different species.

How a Law of Physics Touches Animal Longevity

The boldest claim behind this work is that longevity is not just a biological quirk but a physical strategy that falls out of this new law. Long-lived animals are not simply lucky winners of the genetic lottery; they are systems that have organized themselves to spend energy in very particular ways. They funnel resources into maintaining low-entropy internal states, like pristine DNA repair and robust protein folding, instead of maximizing short-term growth. That choice shows up as slower reproduction, delayed maturity, and other life history traits that ecologists have documented for decades.

What is new is the idea that you can, in principle, quantify this trade-off with a single, law-like relationship between energy input, entropy export, and information retention. In population studies, species with exceptional lifespans often live in relatively stable environments where long-term information is valuable and can be reused. In harsher, more unpredictable settings, the math tilts in favor of sprinting through life and scattering offspring widely. The emerging picture is almost unsettling: from whales to worms, life seems to be playing variations on the same physical score, adjusting how fiercely it fights entropy based on what the environment rewards.

Why It Matters

On the surface, this proposed law might sound like something that belongs only in textbooks, but its implications run through medicine, ecology, and even how we think about our own mortality. If aging is partly governed by a fundamental trade-off between energy use and information maintenance, then interventions that extend lifespan are not just “hacks” but shifts in where an organism sits on that physical curve. That could clarify why some anti-aging treatments show promise in short-lived lab animals yet fail to move the needle much in humans, whose biology is already tuned closer to long-lived strategies. It also suggests that there may be hard physical limits to how far we can push longevity without paying steep costs elsewhere.

Compared with traditional approaches that focus only on genes or single biochemical pathways, this framework zooms out to ask what is physically possible for any system that lives, remembers, and adapts. It nudges us away from searching for one magic molecule and toward understanding how entire networks handle energy and information. In my own reporting, I have seen how this kind of shift can reframe debates overnight: what used to look like random quirks of evolution begin to appear as different ways of solving the same equation. For a field often stuck between poetic metaphors of “wearing out” and the brutal math of entropy, that is a quietly radical change.

Peering Into the Data Behind the Discovery

Under the hood, the proposed law rests on a blend of theoretical work and surprisingly grounded measurements. Physicists used tools from stochastic thermodynamics to track how microscopic fluctuations in energy use relate to changes in information content, whether in a protein network or a patch of neural tissue. Then they compared these predictions with real biological cases: how often critical errors arise in DNA over an animal’s lifespan, how quickly damaged proteins are cleared, how stable certain cellular signals remain under stress. Across multiple systems, they kept finding that the efficiency of information maintenance clustered near the bounds set by the new rule.

To make the story more concrete, consider three rough patterns that emerged repeatedly:

- Short-lived species tend to devote a larger share of energy to rapid growth and reproduction rather than long-term error correction.

- Long-lived animals show unusually low rates of molecular damage accumulation relative to their metabolic output, hinting at more efficient information maintenance.

- Cells in long-lived species often operate closer to theoretical limits on how little energy is needed to correct a given amount of informational “noise.”

None of this means the law is beyond challenge – far from it. But it does mean that across scales, from single cells to whole organisms, the same energy–information fingerprint keeps showing up, which is exactly what you would hope to see if you had stumbled onto a genuine physical principle.

Global Perspectives on a Universal Rule

One of the most striking aspects of this work is how it bridges communities that rarely speak the same language. Physicists look at the new law and see echoes of the principles that govern black holes and quantum measurements, where information has long been a central obsession. Biologists see a possible scaffolding that might unify puzzling observations from fields as disparate as comparative longevity, immune system resilience, and brain plasticity. Ecologists, meanwhile, are starting to ask whether entire ecosystems might also hover near similar balances of energy flow and information preservation.

Viewed globally, this matters because the conditions that shape how organisms manage energy and information are changing fast. Warmer oceans, more frequent heat waves, and shifting food webs all alter the energy budgets that species rely on. If the new law is right, then climate change is not just stressing animals; it is shoving them into different regions of the energy–information landscape, potentially forcing life-history strategies to flip. A coral or seabird species finely tuned to a long-lived, information-rich strategy could suddenly find that the math no longer works in its favor. That raises unsettling questions about which kinds of lives will be favored in a hotter, more chaotic world.

The Future Landscape of Physics and Life

Looking ahead, the most exciting part of this story is how testable it might become. Advances in single-cell sequencing, live-cell imaging, and high-throughput metabolism measurements are giving scientists unprecedented views of how energy and information flow through living systems in real time. If researchers can track, for example, exactly how much energy a neuron spends to keep its signaling reliable over decades, they can plug those numbers straight into the proposed law. Similarly, engineered microbes or organoids could be pushed into different energy regimes in the lab to see whether their information-handling efficiency moves along the predicted curve.

There are also technological echoes waiting in the wings. Computer engineers are already obsessed with energy-efficient information processing, and some are eyeing biological systems as templates for next-generation hardware. A law that quantifies the absolute limits of information maintenance per unit of energy could guide everything from low-power AI chips to autonomous sensor networks in remote environments. On a more speculative front, astrobiologists are asking whether such a law might help identify life beyond Earth by flagging systems where energy and information behave in telltale, life-like patterns. In that sense, what began as a curiosity about aging animals may end up reshaping how we search for company in the universe.

How You Can Engage With This New View of Life

For most of us, the math behind this proposed law will remain safely on the page, but the questions it raises are deeply personal. It invites you to look at your own body as an information-keeping machine, constantly spending energy to repair, remember, and adapt. Simple choices – sleep, nutrition, movement, stress – are ways of nudging your cells toward better or worse information maintenance, even if the effect is subtle. Paying attention to species that embody different strategies, from short-lived insects to century-old trees, can also change how you see the living world around you.

If this kind of research speaks to you, there are straightforward ways to stay involved. You can support organizations that fund fundamental science, including work at the border of physics and biology, where most breakthroughs like this arise. You can encourage science education and outreach in your community so that the next generation of researchers feels comfortable crossing disciplinary lines. And you can keep following the story as new data test, refine, or even overturn this proposed law. In the end, the universe is under no obligation to make sense to us – but when it does, even a little, it is worth paying close attention.

Suhail Ahmed is a passionate digital professional and nature enthusiast with over 8 years of experience in content strategy, SEO, web development, and digital operations. Alongside his freelance journey, Suhail actively contributes to nature and wildlife platforms like Discover Wildlife, where he channels his curiosity for the planet into engaging, educational storytelling.

With a strong background in managing digital ecosystems — from ecommerce stores and WordPress websites to social media and automation — Suhail merges technical precision with creative insight. His content reflects a rare balance: SEO-friendly yet deeply human, data-informed yet emotionally resonant.

Driven by a love for discovery and storytelling, Suhail believes in using digital platforms to amplify causes that matter — especially those protecting Earth’s biodiversity and inspiring sustainable living. Whether he’s managing online projects or crafting wildlife content, his goal remains the same: to inform, inspire, and leave a positive digital footprint.