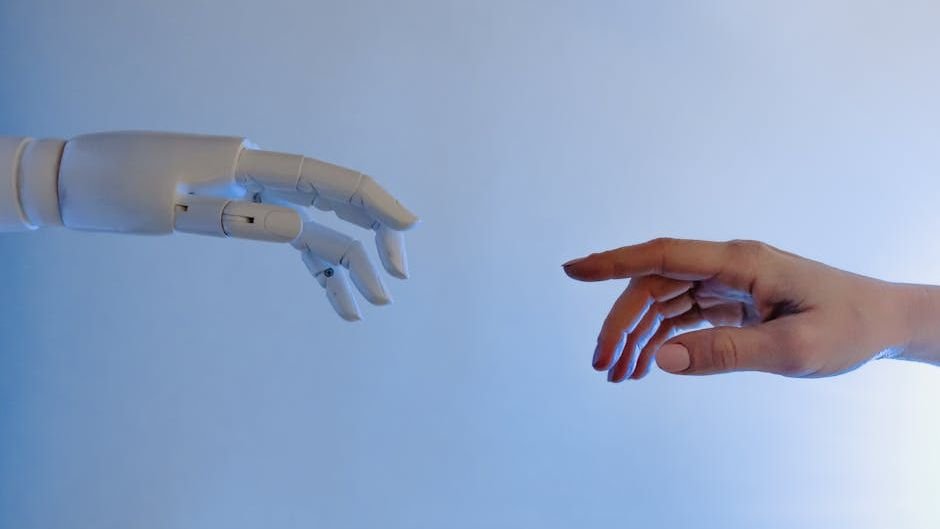

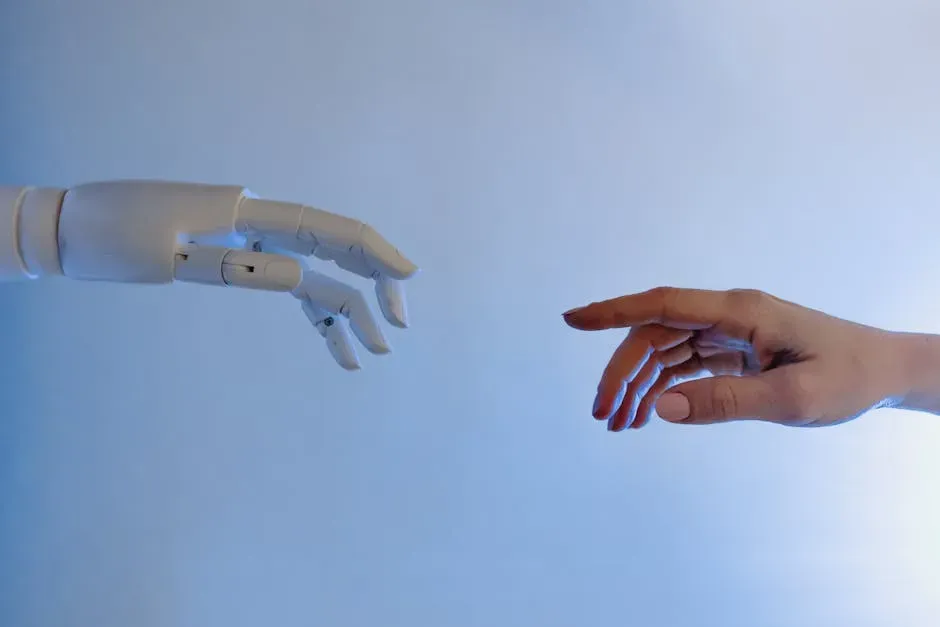

There’s a question that keeps surfacing in labs, philosophy departments, and honestly, in everyday conversations too. When a chatbot tells you it “understands” how you’re feeling, is there anything real behind those words? Or is it just a very convincing mirror, reflecting language patterns back at you?

The gap between human emotional experience and artificial intelligence is widening in some ways and narrowing in others, depending on who you ask. Researchers are now digging deeper than ever into what empathy actually means, and the findings are more surprising, more unsettling, and more fascinating than most people expect. Let’s dive in.

What Does Empathy Actually Mean?

Here’s the thing: most of us use the word “empathy” as if we all agree on what it means. We don’t. Scientists typically break it down into at least two distinct types: cognitive empathy, which is the ability to understand what someone else is thinking or feeling, and affective empathy, which is actually feeling something in response to another person’s emotional state.

Think of it like this. Cognitive empathy is like reading the plot of a sad movie and understanding why the characters are upset. Affective empathy is crying during that movie because you genuinely feel moved. They’re related, but they’re not the same thing.

This distinction matters enormously when we start comparing humans to AI systems. A machine can potentially master the cognitive side: recognizing patterns, predicting emotional states, and generating appropriate responses. The affective side, though? That’s where things get philosophically murky, and honestly, where the real debate begins.

How AI Systems Simulate Empathic Responses

Modern AI language models are trained on staggering amounts of human-generated text: conversations, stories, therapy transcripts, social media posts. From all of this, they develop a surprisingly sophisticated ability to model what an empathic response looks like. The results can feel remarkably human.

In 2025 and into early 2026, multiple research groups published findings showing that large language models could score comparably to humans on certain standardized empathy assessments. These tests measured things like recognizing emotional cues, generating emotionally appropriate replies, and demonstrating what looks like perspective-taking. On paper, the scores were competitive.

The crucial issue is that scoring well on a test designed for humans doesn’t necessarily mean the underlying process is the same. It might just mean the AI has learned to produce outputs that match what human evaluators expect from an empathic entity. That’s a bit like a student memorizing every possible answer without understanding the subject. The result looks the same, but the mechanism is entirely different.

The Neuroscience of Human Empathy

Human empathy isn’t just a soft skill. It’s deeply biological. Researchers have identified brain networks, most notably involving regions like the anterior insula and anterior cingulate cortex, that activate when we observe others experiencing pain or joy. Mirror neurons are often cited here too, though their exact role in human empathy is still debated. It’s almost as if the brain rehearses the experience of another person from the inside.

This embodied quality of human empathy is something AI simply doesn’t have. There’s no body, no nervous system, no hormonal responses. When you feel a knot in your stomach watching a friend struggle, that physical sensation is part of the empathic experience. Honestly, it’s hard to imagine how any system without a body could replicate that.

What makes this especially interesting is that human empathy can also be impaired, biased, or simply switched off. People empathize more readily with those they perceive as similar to themselves, a well-documented phenomenon in social psychology. AI systems trained on biased data can inherit similar tendencies, even though the cause is completely different. Strange parallel, isn’t it?

Where AI Empathy Falls Short

Let’s be real about something. The gaps are significant. AI systems don’t carry memories between conversations in the way a longtime friend does. They don’t have stakes in your wellbeing. An AI model doesn’t lie awake at night worrying about whether it gave you the right advice. There’s no genuine investment.

Researchers studying human-AI interaction have noted that people sometimes form strong emotional bonds with AI systems, even when they intellectually know the system has no feelings. This creates an interesting and somewhat concerning asymmetry. One side of the interaction is emotionally engaged; the other is processing tokens.

There’s also the issue of consistency. A human friend who comforts you after a loss carries that shared history into every future interaction. Current AI systems, unless specifically designed with persistent memory, meet you fresh each time. That’s not empathy in any deep sense. It’s performance, even if it’s a convincing one.

The Philosophical Problem Nobody Can Fully Solve

Even among philosophers and cognitive scientists, there’s no clean agreement on what it would actually take for a machine to “truly” empathize. Some argue that if the functional outputs are identical (if the AI reliably identifies emotional states and responds appropriately), then the distinction between “real” and “simulated” empathy becomes meaningless.

Others (and personally, I find this position more compelling) argue that without subjective experience, without there being “something it is like” to be that system, no genuine empathy is occurring. This ties into the philosophical concept of qualia, the inner felt quality of experience. We have no way to confirm whether any AI has qualia. Not yet, possibly not ever.

It’s worth sitting with the strangeness of that uncertainty for a moment. We can’t even fully prove that other humans are conscious in the way we are. We infer it. With AI, the inference becomes much harder to make, because the internal architecture looks nothing like ours.

Real-World Implications for Mental Health and Care

This debate isn’t purely academic. AI-driven mental health tools are already being deployed at scale. Apps offering AI therapy companions have tens of millions of users globally. In some healthcare systems, AI is being used to support patients between sessions with human therapists, providing check-ins, coping strategies, and emotional support.

Research suggests these tools can genuinely help some people, particularly in contexts where access to human therapists is limited. But the warning signs are real. If a person in crisis interacts with a system that performs empathy without having it, the consequences of a misstep could be severe. A human therapist who senses something is off can escalate. An AI following a script cannot always do the same.

There’s growing consensus among researchers in early 2026 that AI empathy tools need clearer boundaries, better disclosure to users, and robust human oversight built into the system. It’s not that these tools are without value. It’s that we need to be honest about what they are and what they aren’t.

What This Means for the Future of Human Connection

The more sophisticated AI empathy simulation becomes, the more important it will be to define and protect what makes human empathy irreplaceable. Not out of fear, but out of clarity. Humans need genuine connection, the kind that comes with shared vulnerability, mutual risk, and real emotional stakes.

AI systems that simulate empathy well might actually help us understand human empathy better, by showing us precisely which elements are hardest to replicate. The awkward pause before someone says “I’m so sorry.” The way a person remembers something small you mentioned months ago. The fact that someone chose to show up for you when they could have stayed home. Those details matter more than we often admit.

I think the most honest takeaway from where the science currently stands is this: AI can model empathy with impressive accuracy, but modeling something is not the same as having it. The distinction matters not just philosophically but practically: for how we design systems, how we set expectations, and, ultimately, how we treat each other.

Conclusion: Real Feelings in a World of Simulated Ones

The research being done right now on human versus AI empathy is some of the most important work happening in cognitive science. It forces us to ask hard questions not just about machines, but about ourselves. What do we actually value in emotional connection? What are we willing to accept as a substitute, and what should we refuse to compromise on?

Empathy, at its core, is about presence. Real presence, the kind that costs something. Until a machine can have something at stake, can feel the weight of another person’s pain as its own burden, the gap between human and artificial empathy remains vast, no matter how fluent the language gets.

What do you think? Could you ever feel truly understood by an AI, or is something always missing? Share your thoughts in the comments below.