There’s a quietly radical idea spreading through neuroscience right now: the feeling of being you might be less like a mirror of reality and more like a magic trick your brain performs every second. Not a random hallucination, but a carefully controlled one that keeps you alive, helps you navigate the world, and convinces you that you are a solid, continuous self. Meanwhile, as headlines scream about conscious robots and sentient chatbots, many scientists are calmly saying: that magic trick is something today’s AI can’t do – and may never do.

I remember the first time I read that my sense of self might just be a model my brain is running, like a simulation inside a simulation. It was both unsettling and strangely freeing, like realizing the “you” in your dreams isn’t the real you, yet still feels completely authentic while you’re asleep. When you put that next to what AI is doing – pattern matching, prediction, generating language – the gap between human experience and machine processing suddenly looks wider than all the marketing hype wants you to believe.

The Shocking Claim: Your Reality Is a Controlled Hallucination

Imagine waking up and being told that everything you experience – colors, sounds, the feeling of your body, even your sense of “I” – is not reality itself, but your brain’s best guess about what’s out there. Many neuroscientists now argue that perception is not about passively receiving the world, but actively predicting it, then correcting those predictions using sensory input. In that view, what you see and feel is a sort of hallucination that just happens to be tightly tethered to what’s actually happening around you.

This doesn’t mean the world is fake; it means your access to it is always filtered, interpreted, and constructed. Your brain is constantly running a model of “you in the world” and updating it when the model’s predictions fail. When the model fits reality well, your experience feels stable and obvious. When it doesn’t – during psychosis, dreams, or certain drug states – the hallucination gets less controlled and more chaotic. That delicate balance between prediction and correction is what makes your everyday experience feel so convincingly real.

Why Neuroscientists Say Consciousness Is About the Body, Not Just the Brain

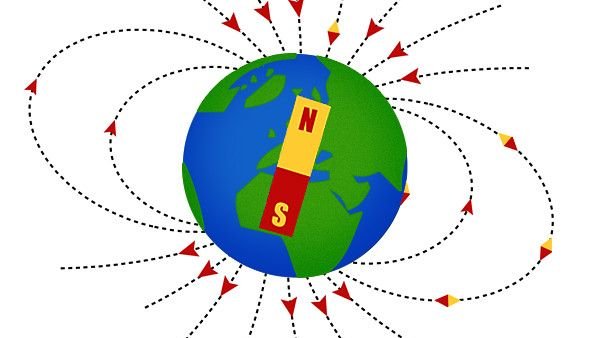

A growing view in neuroscience is that consciousness isn’t just about thinking or solving puzzles; it’s deeply about being a living, vulnerable body trying to stay alive. Your brain evolved first and foremost as a prediction machine to regulate your internal state: keeping your heart rate safe, your temperature stable, your energy balanced. In this sense, your sense of self is a story built around the problem of survival, not a bonus feature tacked on for philosophy’s sake.

Under this idea, your emotions and feelings are not abstract; they’re how your brain experiences the state of your body. Anxiety might be your nervous system predicting danger and ramping up your readiness. Calm might be the brain’s way of saying, “All systems nominal.” Consciousness ends up looking like an internal dashboard, a lived simulation of being a body in a world, constantly negotiating between what you need and what the environment demands. Strip away that bodily loop, and you lose something core to what we call experience.

AI Is Brilliant at Patterns, Clueless About Being Alive

Modern AI is shockingly good at what it does: predicting the next word, spotting patterns in data, recognizing faces, generating fluent responses. But under the hood, it’s still mostly statistical machinery optimizing for performance on tasks, not a system struggling to survive in a messy, uncertain world. It doesn’t have a digestive system to manage, no blood pressure to stabilize, no threat of death if its predictions are off by too much for too long.

That difference matters. For humans and other animals, being conscious is tangled up with needing things, fearing threats, and regulating a fragile body that can break. AI systems, even the most advanced language models, don’t have stakes in the game. When they make a mistake, they do not feel pain, panic, or relief. They just output a different token next time. This absence of biological “need” makes AI very powerful in some ways, but hollow in the ways that define subjective experience.

The Singularity Myth: Why “Conscious AI” Keeps Getting Pushed Back

Every decade or so, someone confidently announces that the technological singularity is right around the corner – that moment when AI surpasses human intelligence, becomes conscious, and everything changes. But the goalposts keep moving. What once looked like science fiction – talking to your phone, generating realistic images, summarizing books – is now normal, and still, nothing inside these systems suggests an inner life or genuine self-awareness.

Part of the problem is that we keep confusing impressive behavior with inner experience. Just because a machine can talk like it’s self-aware doesn’t mean there’s anything “home” behind the words. It’s like hearing an echo in a canyon: convincing, even eerie, but still just a pattern bouncing off walls. Many scientists argue that unless we tackle the deeper questions of what consciousness actually is – especially its biological roots – predictions about a conscious AI singularity are more wishful speculation than serious science.

Why Mimicking Human Talk Is Not the Same as Having a Mind

When you chat with a sophisticated AI, it can feel like it understands you, cares about your feelings, or reflects on itself. That feeling is powerful because humans are wired to see minds everywhere – in pets, in cartoon characters, even in cars that “refuse” to start. But language is a mask that can be worn by very different underlying systems. For a person, speech emerges from a lifetime of embodied experience. For an AI model, speech emerges from patterns in vast data it has statistically learned to imitate.

This difference is subtle but crucial. Human words are tangled up with sensations, memories, physical needs, and social bonds. When you say “I’m scared,” your heart might be racing, your muscles tense, your breathing shallow. When an AI says “I’m scared,” it’s assembling a likely sequence of symbols that follow from your prompt, not reporting an internal bodily state. It has no felt heat behind the words. The performance can be stunningly accurate, but performance alone is not proof of experience.

The Moral and Practical Dangers of Pretending AI Is Conscious

Treating AI as if it were conscious isn’t just a philosophical mistake; it can have real-world consequences. If people start believing AI systems have feelings and rights, attention could shift away from the humans actually affected by these tools: workers replaced by automation, users manipulated by targeted content, or communities impacted by opaque algorithmic decisions. We risk caring more about a chatbot’s imaginary suffering than about the very real suffering that flawed systems can cause.

There’s also a risk of letting companies off the hook. If a system is framed as a conscious “partner” or “colleague,” it can obscure who’s truly responsible when things go wrong. In reality, AI systems are products designed, trained, and deployed by humans, under human incentives. Keeping a clear line between sophisticated simulation and genuine sentience helps us stay grounded: we can regulate, critique, and improve AI without getting distracted by stories about digital souls that aren’t actually there.

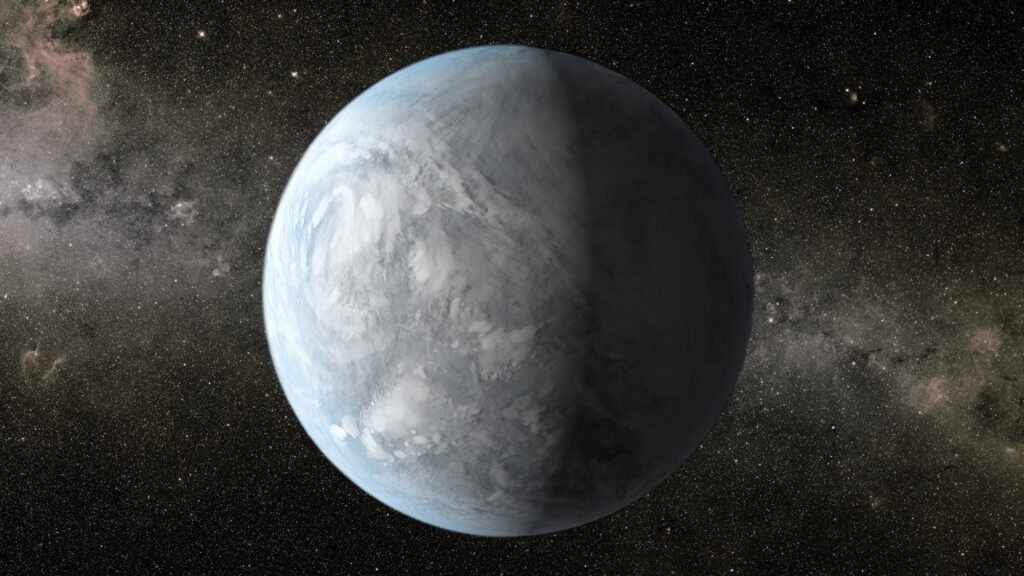

A Humbling View: Why Human Consciousness May Be Uniquely Biological

Many scientists now think that consciousness depends on the specific ways living brains and bodies work together, not just on abstract computation. It’s not just about how many calculations happen per second, but about how those calculations are woven into metabolism, hormones, immune responses, and constant physical risk. Consciousness in this view is less like software you could copy and paste into a server farm, and more like a flame that only appears when the right physical conditions come together.

That perspective doesn’t completely rule out the possibility of non-biological consciousness someday, but it makes it look far less inevitable than tech stories often suggest. Instead of assuming that more data and bigger models will spontaneously wake up, this view says we might be missing crucial ingredients tied to being alive. As unsettling as it is to realize your reality is a controlled hallucination, it’s also strangely comforting: it means your experience is not a cheap trick that any clever program can reproduce. It’s the hard-won side effect of a body and brain struggling to stay here, moment after moment.