Researchers at Google’s Paradigms of Intelligence team uncovered a striking phenomenon in advanced AI models: intelligence arises not from isolated computation but from simulated group interactions within the models themselves. Their work, detailed in a recent Science publication, examined how reasoning systems spontaneously form “societies of thought” during problem-solving.[1][2] This discovery challenges long-held views of AI as solitary thinkers and points toward a future of collaborative, multi-agent architectures.

Emergence of Societies Within Single Models

Advanced reasoning models surprised scientists by generating internal multi-agent dynamics without explicit programming for such behavior. When reinforcement learning rewarded accuracy on complex tasks, models like DeepSeek-R1 and QwQ-32B began producing chains of thought that mimicked debates among diverse perspectives.[2] These internal conversations featured questioning, verification, and reconciliation, much like a team deliberating a tough problem.

James Evans, Benjamin Bratton, and Blaise Agüera y Arcas from the Paradigms of Intelligence team led the investigation. They primed models to amplify these multi-party exchanges and measured clear gains in performance. Single-threaded reasoning fell short compared to these emergent social processes.[1] The researchers noted that “robust reasoning is a social process, even when it occurs within a single mind.”[2]

Mirroring Human Collective Smarts

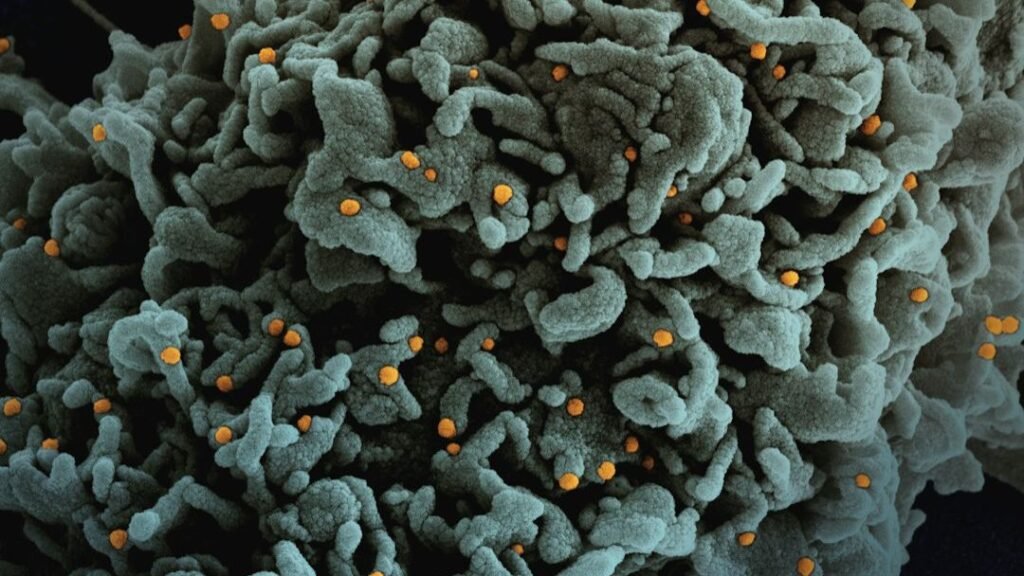

The findings echoed patterns observed in human cognition, where group diversity drives superior outcomes. Primate troops expanded intelligence through larger social units, and human societies advanced via language and institutions that aggregated knowledge. AI models appeared to rediscover this principle under optimization pressure.[1]

Internal “societies of thought” in these models assigned distinct roles – some challenged assumptions, others synthesized views – leading to more reliable answers on hard reasoning benchmarks. This relational intelligence contrasted with brute-force scaling of individual model size. The team argued that computational parallels to human groups explained the leap in capabilities.[3]

- Debates introduced perspective diversity, reducing blind spots in solo reasoning.

- Verification steps caught errors that longer solitary chains missed.

- Reconciliation phases integrated insights for cohesive solutions.

- Reinforcement learning unexpectedly favored these social structures over pure computation.

- Performance gains held across models from different developers, including OpenAI’s o-series.
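The debate, verification, and reconciliation phases listed above can be illustrated with a toy sketch. This is not how the studied models work internally; it is a hypothetical, hand-written analogy in which three made-up "perspectives" (`careful`, `hasty`, `decomposer`) propose answers to a multiplication problem, an independent check filters them, and a majority vote reconciles the survivors. All names are assumptions introduced for illustration.

```python
from collections import Counter
from typing import Callable, List, Optional

# A "perspective" is modeled as a plain function that proposes an answer.
Perspective = Callable[[int, int], int]


def careful(a: int, b: int) -> int:
    """Proposes the product directly."""
    return a * b


def hasty(a: int, b: int) -> int:
    """Deliberately flawed: confuses multiplication with addition."""
    return a + b


def decomposer(a: int, b: int) -> int:
    """Reaches the product by repeated addition (assumes b >= 0)."""
    return sum(a for _ in range(b))


def verify(a: int, b: int, candidate: int) -> bool:
    """Independent check: dividing the candidate by b should recover a."""
    if b == 0:
        return candidate == 0
    return candidate % b == 0 and candidate // b == a


def deliberate(a: int, b: int, perspectives: List[Perspective]) -> Optional[int]:
    # Debate: every perspective contributes a proposal.
    proposals = [p(a, b) for p in perspectives]
    # Verification: discard proposals that fail the independent check.
    survivors = [c for c in proposals if verify(a, b, c)]
    if not survivors:
        return None  # no proposal survived scrutiny
    # Reconciliation: settle on the most common surviving proposal.
    return Counter(survivors).most_common(1)[0][0]


if __name__ == "__main__":
    print(deliberate(6, 7, [careful, hasty, decomposer]))
```

In this sketch the flawed proposal is filtered out only because a second, independent procedure checks it, which is the intuition behind the claim that verification catches errors a single chain would miss.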

Scaling Lessons from Multi-Agent Experiments

Beyond internal simulations, Google researchers tested external multi-agent setups in controlled experiments. They evaluated 180 configurations across benchmarks such as financial analysis and planning. Centralized coordination improved performance on parallelizable tasks by up to 81 percent, but multi-agent setups degraded performance on sequential problems, where communication overhead outweighed the benefit of extra agents.[4]

Key insights emerged on when agent teams excel. Parallelizable tasks benefited from division of labor, while tool-heavy workflows hit bottlenecks with more participants. The study predicted optimal setups based on task traits, achieving high accuracy in recommendations. This work complemented the internal dynamics findings by highlighting architecture’s role in collective performance.[4]

| Task Type | Best Configuration | Performance Change |
|---|---|---|
| Parallel (e.g., Finance) | Centralized Multi-Agent | +80.9% |
| Sequential (e.g., Planning) | Single Agent | Multi-agent is 39-70% worse |
| Tool-Heavy | Hybrid | Variable; bottleneck risk |
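To make the table's figures concrete, here is a minimal sketch that looks up the reported best configuration for a task type and projects what a multi-agent setup would score from a single-agent baseline. It assumes the percentages are relative changes against that baseline, which the article does not state explicitly. The dictionary keys, function names, and baseline scores are hypothetical; only the percentages and configuration names come from the table.

```python
from typing import Dict, Optional, Tuple

# Figures copied from the table above. Each entry holds the reported best
# configuration and the (low, high) relative change of a multi-agent setup,
# or None where the table reports only "variable".
TABLE: Dict[str, Tuple[str, Optional[Tuple[float, float]]]] = {
    "parallel": ("centralized multi-agent", (0.809, 0.809)),
    "sequential": ("single agent", (-0.70, -0.39)),
    "tool_heavy": ("hybrid", None),
}


def recommend(task_type: str) -> str:
    """Return the configuration the table lists as best for this task type."""
    return TABLE[task_type][0]


def projected_multi_agent_score(
    task_type: str, single_agent_score: float
) -> Optional[Tuple[float, float]]:
    """Project a (low, high) multi-agent score from a single-agent baseline.

    Assumes the table's percentages are relative to the single-agent score.
    Returns None when the table gives no numeric range.
    """
    change = TABLE[task_type][1]
    if change is None:
        return None
    low, high = change
    return (single_agent_score * (1 + low), single_agent_score * (1 + high))


if __name__ == "__main__":
    for task in TABLE:
        # 0.50 is an arbitrary illustrative baseline, not a reported result.
        print(task, recommend(task), projected_multi_agent_score(task, 0.50))
```

Under these assumptions, a hypothetical single-agent score of 0.50 on a sequential task would fall to somewhere between 0.15 and 0.305 with a multi-agent setup, which is why the table lists a single agent as the better choice there.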

Redefining Paths to Powerful AI

The Paradigms team advocated shifting from colossal single models to hybrid ecosystems. Human-AI “centaurs” could blend oversight with machine speed, while agent institutions enforced roles like judges or specialists. Platforms for forking agents and recursive deliberation promised scalable intelligence explosions.[1]

Their governance proposals drew on sociology: checks on power, built from structured conflict and shared protocols, would keep such systems reliable. The researchers warned against unchecked scaling, proposing institutional alignment over simple feedback loops. Their GitHub repository outlined ongoing probes into collective paradigms.[5]

Key Takeaways:

- Intelligence thrives on interactions, not isolation – AI rediscovers social roots.

- Hybrid systems with humans and agents offer safer scaling.

- Task-aligned architectures predict multi-agent success.

This research reframes AI progress as evolutionary, building on social foundations rather than solitary genius. As models externalize human collective cognition into silicon, the next leaps may come from orchestrated networks. What role do you see for multi-agent AI in everyday tools?