Artificial intelligence has slipped into our lives so quietly that many of us only notice it when something goes spectacularly wrong – or astonishingly right. In just a decade, systems that once struggled to recognize a cat in a photo now help design drugs, steer cars, and even draft laws. For scientists, AI is less a single technology and more a new way of doing discovery itself, a kind of extra sense layered onto human perception. Yet its rise also exposes fault lines: bias baked into algorithms, jobs at risk, and decisions made by systems few people truly understand. The story of AI today is not just about faster chips or bigger datasets; it is about who we become when we share our world with machines that can learn.

The New Microscope: How AI Is Accelerating Scientific Discovery

Walk into a modern lab and the most important instrument may not be a gleaming microscope but a quiet server humming in the corner. Researchers now use AI models to sift through mountains of genomic data, telescope images, and particle collisions in ways that would have taken human teams years or even decades. In materials science, AI-driven systems have proposed new alloys, catalysts, and battery chemistries by exploring vast spaces of possible combinations that no human could systematically test. During the race to understand new pathogens, machine learning tools helped predict protein structures and highlight promising drug targets far faster than traditional methods. To many scientists, AI feels like upgrading from a candle to a floodlight in a darkened room of unanswered questions.

What makes this transformation so dramatic is not just speed but the kinds of questions researchers can now ask. Instead of running one careful experiment at a time, teams can simulate thousands of scenarios and let algorithms flag the most intriguing outliers. That shift turns the scientific method into something more iterative and exploratory, especially in climate modeling, astrophysics, and systems biology. There is a risk, of course, that scientists become overconfident in patterns pulled from noisy data, mistaking correlation for causation. But when used carefully – paired with rigorous experiments and skepticism – AI is becoming less a black box oracle and more a partner in reasoning, one that pushes us toward discoveries we might never have thought to chase.
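The overconfidence risk mentioned above can be made concrete with a toy simulation. The sketch below is illustrative only: the sample size, the number of scenarios, and the 0.7 threshold are all assumptions chosen for the demonstration, not figures from any real study. It generates a thousand series of pure noise, compares each with an equally random "target" measurement, and counts how many look strongly correlated by chance alone.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


# Assumed toy settings: few data points, many candidate "scenarios".
N_POINTS = 10
N_SCENARIOS = 1000
THRESHOLD = 0.7

rng = random.Random(0)  # fixed seed so the run is repeatable

# The target and every scenario are independent random noise,
# so any correlation found here is spurious by construction.
target = [rng.gauss(0, 1) for _ in range(N_POINTS)]
scenarios = [[rng.gauss(0, 1) for _ in range(N_POINTS)] for _ in range(N_SCENARIOS)]

correlations = [pearson(s, target) for s in scenarios]
strongest = max(correlations, key=abs)
n_flagged = sum(abs(r) > THRESHOLD for r in correlations)

print(f"strongest correlation found in pure noise: {strongest:.2f}")
print(f"scenarios flagged above |r| > {THRESHOLD}: {n_flagged} of {N_SCENARIOS}")
```

With so few data points and so many candidates, some noise series will clear the threshold every time, which is why a flagged outlier is a prompt for a follow-up experiment rather than a finding in itself.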

From Clicks to Control: AI and the New Attention Economy

Every time you pause on a video, like a post, or hover over a product, an AI system is quietly taking notes. Recommendation algorithms now orchestrate a huge share of what people watch, read, and buy, turning human attention into one of the most carefully optimized resources on the planet. These systems learn subtle patterns – what you binge at night versus what you skim at lunch – and feed you content designed to keep you engaged for just a little longer. It works: some of the largest video platforms have reported that automated recommendations drive most of the viewing time on their services, far more than unaided browsing would. The result is a media ecosystem where the dominant editor is no longer a human curator, but a constantly learning model tuned for engagement.

This shift brings real benefits and real dangers. On the positive side, recommendation systems can surface niche creators, obscure music, or highly specialized educational content that would otherwise be buried. At the same time, algorithms that learn from our past behavior can funnel us into echo chambers, amplifying outrage, conspiracy theories, or extreme views because they keep us scrolling. Traditional media gatekeepers had their own biases, but at least they were human biases we could interrogate directly. With AI in the loop, the logic of influence becomes more opaque, driven by statistical associations rather than explicit editorial judgment. Choosing how we design and regulate these systems has become a quiet but crucial front line in how societies form opinions and make collective decisions.

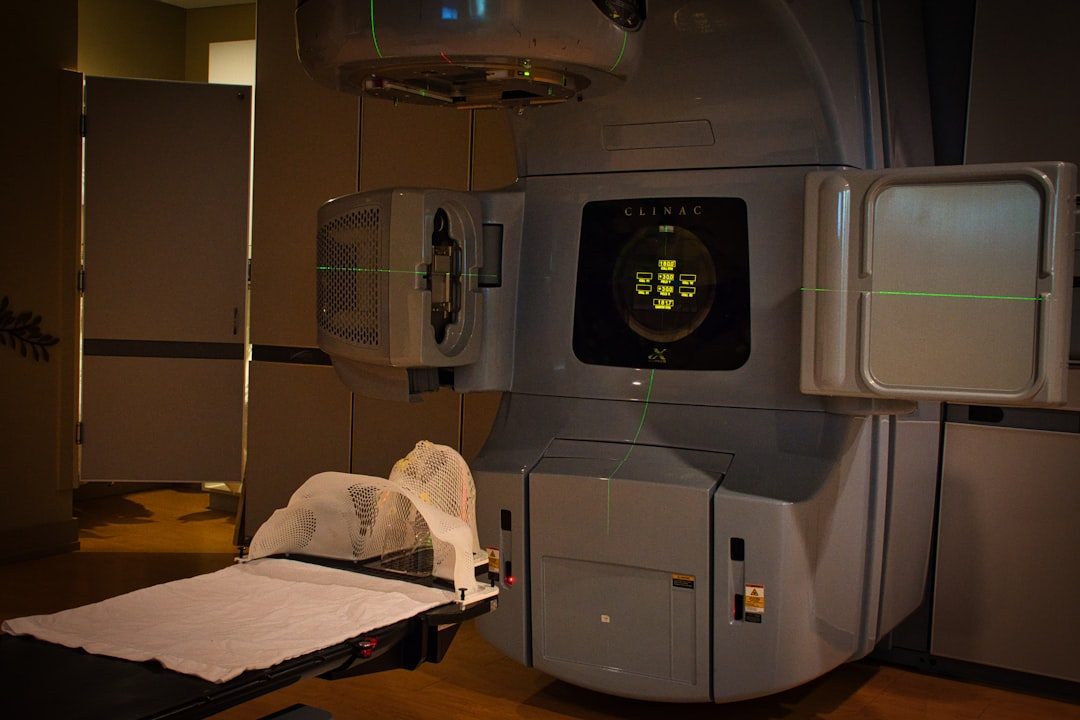

The Hidden Clues: AI in Medicine and the Fight to Predict Disease

In hospitals around the world, AI is starting to see things in scans and lab results that human eyes easily miss. Algorithms trained on millions of X‑rays, CT scans, and retinal images can pick up faint patterns that hint at early cancer, heart disease, or diabetes long before symptoms appear. Some systems have matched or exceeded specialist performance in narrow tasks, such as spotting tiny tumors or detecting early eye damage caused by chronic conditions. The promise is enormous: catch diseases earlier, personalize treatment, and free up overworked clinicians from routine image triage. For patients, that could mean fewer invasive biopsies, shorter hospital stays, and a better chance of surviving illnesses that once went unnoticed until it was too late.

Yet there is a tension lurking beneath the success stories. Many AI models in medicine are trained on data from specific populations, meaning they can struggle or misfire when deployed in regions with different demographics or healthcare practices. Regulators and clinicians increasingly demand clear evidence that systems work across diverse groups, not just in carefully controlled trials. There is also the issue of accountability: when an AI system misses a diagnosis, who exactly is at fault – the hospital, the vendor, the clinicians who trusted it? Despite these hurdles, the trend is clear: medicine is shifting from a reactive field, treating disease after it emerges, to a more predictive discipline where machines flag risks long before humans feel them.

From Ancient Tools to Modern Minds: Work, Creativity, and the AI Job Shake-Up

For most of history, new machines replaced muscle, not thought. Today’s AI tools cross that boundary, encroaching on tasks we once assumed were uniquely human, from drafting legal briefs to composing music. In offices, generative models now churn out first drafts of reports, code, marketing copy, and even slide decks in seconds, transforming how knowledge workers spend their time. Some companies report that routine drafting and data-entry tasks can be cut dramatically, letting employees focus more on strategy, client interaction, or high-level design. Others quietly use AI to handle tasks once sent to human contractors, shifting economic power toward those who own and operate the models themselves.

The creative fields feel this shift acutely. Illustrators, writers, and composers find themselves competing with systems that can mimic their style after ingesting vast troves of online content. At the same time, many artists treat AI like a new instrument: a synthesizer for ideas, capable of generating unexpected sketches, melodies, or story seeds that humans then refine. History suggests that new tools often displace some forms of work while spawning others, but the transition can be bruising and uneven. The key debates now revolve around fair compensation for training data, transparent labeling of AI‑generated content, and reimagining education so that people learn to work with these systems instead of being blindsided by them. In that sense, AI is forcing a deeper question about what parts of our work we truly value – and what we are willing to automate away.

Why It Matters: Power, Bias, and the Politics Inside the Algorithms

It is tempting to think of AI as neutral math, humming along above the fray of human politics. In reality, every system reflects choices about which data to use, which errors are acceptable, and which goals matter most. When algorithms help decide who gets a loan, which job applicants are shortlisted, or which neighborhoods receive extra police patrols, subtle biases in their training data can translate into very real harms. Studies of machine learning models used in hiring and criminal justice have found cases where they systematically disadvantaged certain racial or socioeconomic groups, even when sensitive attributes were not explicitly included. The patterns were smuggled in through proxies like postal codes, education histories, or employment gaps.
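How a proxy can smuggle bias into a model is easier to see with a small worked example. The sketch below uses entirely invented data and a deliberately crude "model" (approve a postal code if at least half of its past applicants were approved); the area names, group labels, and threshold are hypothetical and stand in for no real system. The group label is never shown to the rule, yet the outcomes still split along group lines because group membership and postal code overlap.

```python
from collections import defaultdict

# Invented historical records: (postal_code, group, approved_in_the_past).
HISTORY = [
    ("north", "x", True), ("north", "x", True), ("north", "x", False), ("north", "y", True),
    ("south", "y", False), ("south", "y", False), ("south", "y", True), ("south", "x", False),
]


def fit_postal_rule(history):
    """Learn an approve/reject rule per postal code; the group label is ignored."""
    counts = defaultdict(lambda: [0, 0])  # postal_code -> [approved, total]
    for postal, _group, approved in history:
        counts[postal][0] += int(approved)
        counts[postal][1] += 1
    return {postal: ok / total >= 0.5 for postal, (ok, total) in counts.items()}


def approval_rate_by_group(history, rule):
    """Apply the postal-code rule to everyone and report approval rates per group."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for postal, group, _approved in history:
        counts[group][0] += int(rule[postal])
        counts[group][1] += 1
    return {group: ok / total for group, (ok, total) in counts.items()}


rule = fit_postal_rule(HISTORY)
rates = approval_rate_by_group(HISTORY, rule)

print(rule)   # {'north': True, 'south': False}
print(rates)  # {'x': 0.75, 'y': 0.25}
```

Dropping the sensitive column did nothing here: the rule approves three quarters of group x and one quarter of group y purely on the basis of where people live. Real audits therefore compare outcomes across groups instead of only checking which inputs a model was given.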

Traditional decision-making was also riddled with bias, of course, but at least it could often be challenged by confronting an identifiable person or policy. AI systems, by contrast, can hide behind complexity and corporate secrecy, making it difficult for affected individuals to contest decisions. This matters because as the models become more capable, the temptation to offload hard choices onto them will only grow. A scientifically literate public debate about AI requires more than just marveling at new breakthroughs; it demands scrutiny of who builds these systems, who audits them, and who bears the consequences when they fail. The algorithms are not just technical artifacts – they are new levers of power that need the same level of democratic oversight as any other major infrastructure.

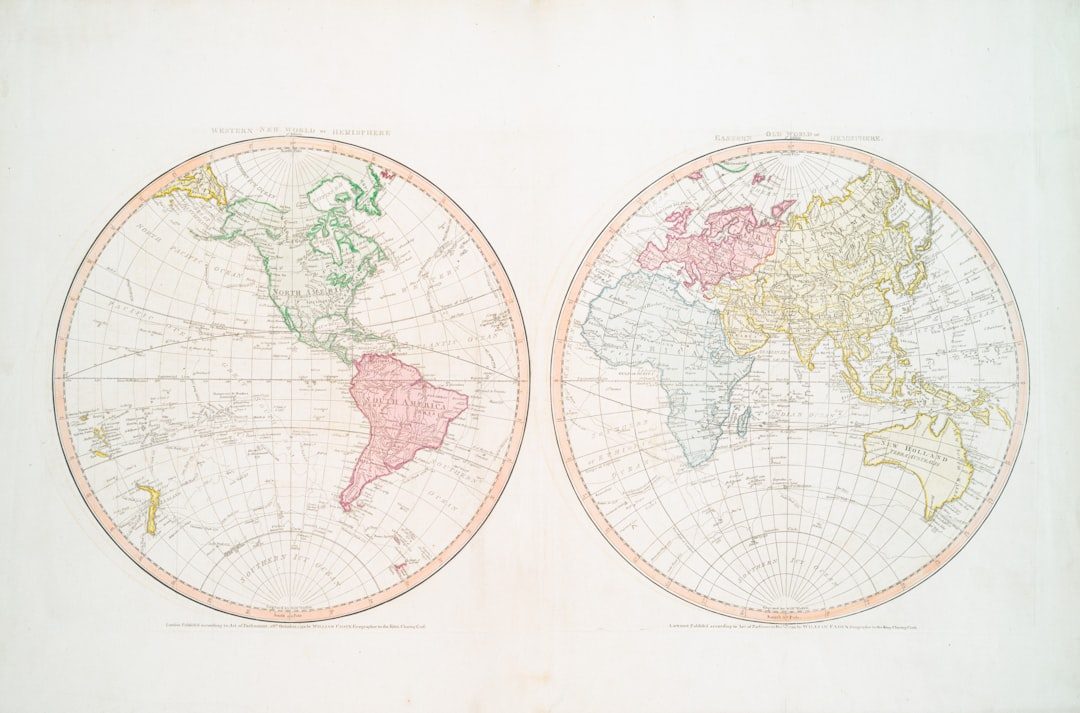

Global Perspectives: AI’s Uneven Map of Risks and Rewards

From a global vantage point, AI does not spread evenly; it pools where data, money, and compute are most abundant. A handful of countries host the largest model-training centers, while many others rely on imported systems whose inner workings they do not control. That imbalance raises tough questions about technological sovereignty: if critical services like translation, education, or even weather prediction depend on foreign companies’ algorithms, how much influence do local governments retain? At the same time, researchers and startups in developing regions are building their own models tuned to local languages, farming practices, or disease burdens, showing that AI can be adapted rather than simply imported. Projects in agriculture, for instance, use smartphone photos and simple diagnostic tools to help smallholder farmers spot crop diseases or optimize irrigation without needing expensive equipment.

There is another global wrinkle: AI runs on energy, and large models demand considerable electricity and cooling. As more sectors adopt heavy-duty computation, the environmental footprint of training and deploying advanced systems becomes impossible to ignore. Some data centers are experimenting with renewable power and more efficient chips, but the broader question remains about how to balance growth in AI with climate commitments. Meanwhile, international organizations and coalitions are racing to draft guidelines and voluntary frameworks for responsible AI use, though enforcement remains patchy. In many ways, AI has become a mirror showing where global cooperation is working – and where it is fraying under the strain of technological competition.

Silent Co‑Pilots: How AI Is Changing Everyday Life and Decision-Making

For many people, the most visible face of AI is not in a lab or data center but in their pocket or car dashboard. Navigation apps reroute commutes in real time based on traffic predictions, language tools turn awkward translations into fluent conversation, and digital assistants handle mundane tasks like reminders or shopping lists. These systems subtly shift our habits, encouraging us to trust algorithmic suggestions over our own rough guesses. Over time, that can change how comfortable we feel driving without GPS, remembering phone numbers, or even planning travel independently. AI becomes a kind of silent co‑pilot, shaping choices not through direct commands but through highly convenient nudges.

There is a psychological dimension to this shift that researchers are only beginning to unpack. Reliance on recommendation engines can create a sense that the world is curated just for us, blurring the line between genuine preference and algorithmically sculpted taste. At the same time, these tools can be a lifeline for people with disabilities, language barriers, or limited access to formal education, making information and services easier to reach. The same system that fuels a late-night binge-watch can also enable a child to explore astronomy or a gig worker to navigate an unfamiliar city. The challenge is to design interfaces and defaults that support agency rather than eroding it, making the human–machine partnership feel like collaboration rather than quiet control.

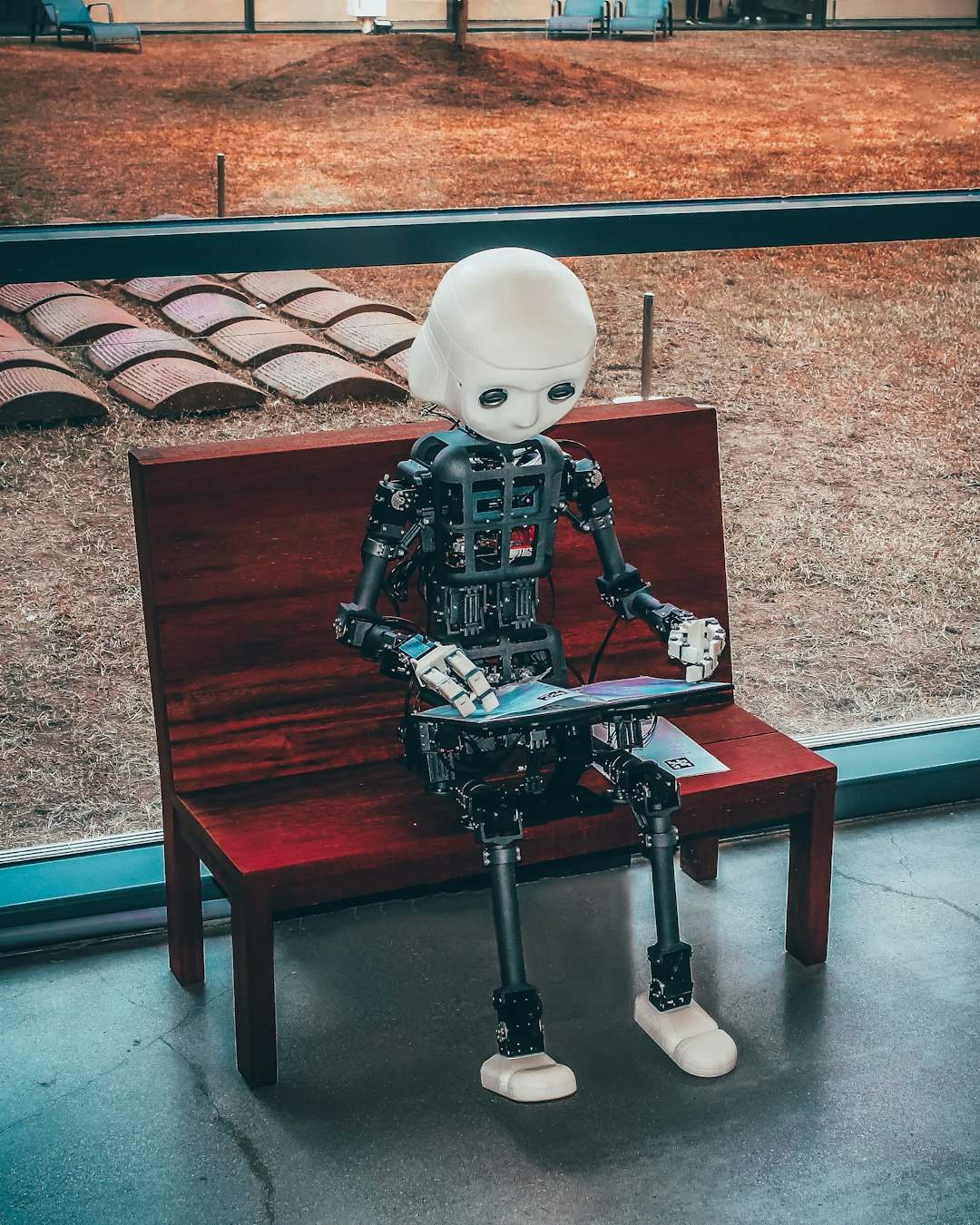

The Future Landscape: Smarter Models, Tougher Choices

Looking ahead, the trajectory of AI points toward systems that are more general, more conversational, and more deeply integrated into physical machinery. Robots guided by advanced models will not just roll along factory floors; they may stock shelves, perform basic home assistance, or handle hazardous tasks in disaster zones. In science, models that can read papers, generate hypotheses, and propose experiments could become full participants in research teams, pushing the pace of discovery even further. At the same time, synthetic media tools will grow more polished, making it harder to tell authentic images, voices, or videos from fabricated ones. That will strain legal systems, journalism, and public trust in ways that are already visible but likely to intensify.

The technical community is also exploring ways to align powerful models with human values, building in safeguards so that systems behave predictably even in unfamiliar situations. This is not a solved problem; aligning complex, adaptive systems with the full diversity of human norms is an ongoing research frontier. Governments are beginning to roll out regulations that categorize AI systems by risk level, demand transparency, and require testing before deployment in sensitive areas like healthcare or education. Those rules will evolve as capabilities grow, in a feedback loop between scientific progress and social response. The future landscape of AI is less a straight line of invention and more a negotiation between what is possible, what is profitable, and what we decide is acceptable.

From Curiosity to Action: How Readers Can Navigate an AI-Shaped World

For all the grand narratives about AI, the most meaningful changes often start with small, personal choices. One practical step is simply to become more conscious of when and how you are interacting with algorithms, from search engines and recommendation feeds to workplace tools that suggest edits or rank performance. Paying attention to where these systems come from, what data they might use, and how their objectives shape your experience can turn passive consumption into active engagement. Supporting journalism, research organizations, and civil society groups that investigate and explain AI helps keep the public conversation grounded in evidence rather than hype. You do not need a computer science degree to ask pointed questions about accuracy, fairness, and accountability.

There are also opportunities to participate more directly in shaping how AI is built and deployed. Some projects invite volunteers to contribute data, test early tools, or offer feedback on how systems perform across different communities. Educators and parents can help the next generation treat AI as a powerful but imperfect tool – something to be interrogated and complemented, not worshipped or feared. On the civic side, engaging with policy discussions, public consultations, or local technology initiatives can influence how governments regulate and adopt these systems. Ultimately, AI is not an alien force descending from the cloud; it is a human-made technology that reflects our priorities and blind spots. The more we approach it with curiosity, skepticism, and a willingness to learn, the better chance we have of steering it toward outcomes we actually want.

Suhail Ahmed is a passionate digital professional and nature enthusiast with over 8 years of experience in content strategy, SEO, web development, and digital operations. Alongside his freelance journey, Suhail actively contributes to nature and wildlife platforms like Discover Wildlife, where he channels his curiosity for the planet into engaging, educational storytelling.

With a strong background in managing digital ecosystems — from ecommerce stores and WordPress websites to social media and automation — Suhail merges technical precision with creative insight. His content reflects a rare balance: SEO-friendly yet deeply human, data-informed yet emotionally resonant.

Driven by a love for discovery and storytelling, Suhail believes in using digital platforms to amplify causes that matter — especially those protecting Earth’s biodiversity and inspiring sustainable living. Whether he’s managing online projects or crafting wildlife content, his goal remains the same: to inform, inspire, and leave a positive digital footprint.